Adversarial examples are intuitively related to uncertainty, so Bayesian neural networks (BNNs), i.e., neural networks with a probability distribution placed over their weights and biases, have the potential to provide stronger robustness properties. BNNs also enable principled evaluation of model uncertainty, which can be taken into account at prediction time to support safe decision making. We study probabilistic safety for BNNs, defined as the probability that, for all points in a given input set, the prediction of the BNN lies in a specified safe output set. In adversarial settings, this amounts to computing the probability that adversarial perturbations of an input result in only small variations in the BNN output, which is a probabilistic variant of local robustness for deterministic neural networks.
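The definition above can be written compactly as follows. The notation is an assumption introduced here for illustration: $T$ is the input set, $S$ the safe output set, $f^{w}$ the network with weights $w$, and $p(w \mid \mathcal{D})$ the posterior over weights given training data $\mathcal{D}$.

```latex
P_{\mathrm{safe}}(T, S) \;=\; \mathrm{Prob}_{\,w \sim p(w \mid \mathcal{D})}
  \bigl( \forall x \in T,\; f^{w}(x) \in S \bigr)
```

For local robustness, $T$ would typically be a small ball around a test input and $S$ a set of outputs close to the prediction at that input.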

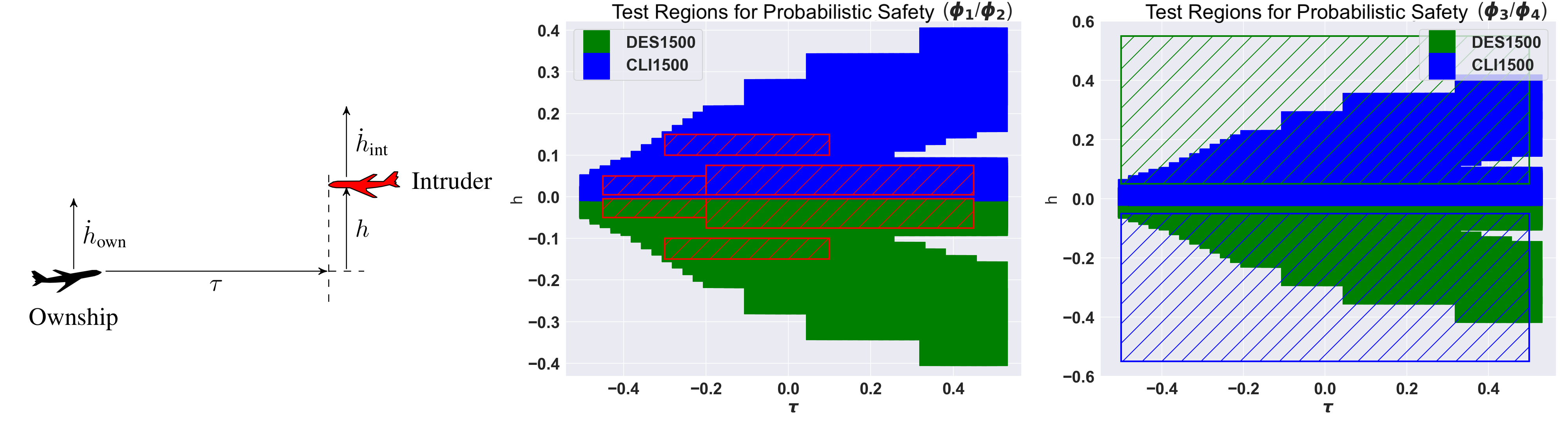

We propose a framework based on relaxation techniques from non-convex optimisation (interval and linear bound propagation) for the analysis of probabilistic safety for BNNs with general activation functions and multiple hidden layers. We evaluate the methods on the VCAS autonomous aircraft controller. The figure above shows the geometry of VCAS (left), a visualisation of the ground-truth labels (centre), and the computed safe regions (right).
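As a rough illustration of interval bound propagation, the sketch below pushes a box of inputs through one affine layer whose weights and biases are themselves only known to lie in intervals, followed by a ReLU. It is a minimal sketch under stated assumptions, not the framework's implementation: the function names and the list-based representation are hypothetical, and only the standard library is used.

```python
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]


def interval_mul(a_lo: float, a_hi: float, b_lo: float, b_hi: float) -> Tuple[float, float]:
    """Bounds on a * b when a is in [a_lo, a_hi] and b is in [b_lo, b_hi]."""
    products = (a_lo * b_lo, a_lo * b_hi, a_hi * b_lo, a_hi * b_hi)
    return min(products), max(products)


def affine_bounds(
    x_lo: Vector, x_hi: Vector,
    w_lo: Matrix, w_hi: Matrix,
    b_lo: Vector, b_hi: Vector,
) -> Tuple[Vector, Vector]:
    """Bounds on y = W x + b when x, W and b each range over a box."""
    y_lo, y_hi = [], []
    for row_lo, row_hi, bias_lo, bias_hi in zip(w_lo, w_hi, b_lo, b_hi):
        lo, hi = bias_lo, bias_hi
        for wl, wh, xl, xh in zip(row_lo, row_hi, x_lo, x_hi):
            p_lo, p_hi = interval_mul(wl, wh, xl, xh)
            lo += p_lo
            hi += p_hi
        y_lo.append(lo)
        y_hi.append(hi)
    return y_lo, y_hi


def relu_bounds(lo: Vector, hi: Vector) -> Tuple[Vector, Vector]:
    """ReLU is monotone, so it can be applied to each bound separately."""
    return [max(0.0, v) for v in lo], [max(0.0, v) for v in hi]


# Hypothetical example: inputs in [0, 1]^2, one output neuron with exact weights.
pre_lo, pre_hi = affine_bounds(
    [0.0, 0.0], [1.0, 1.0],
    [[1.0, -1.0]], [[1.0, -1.0]],
    [0.0], [0.0],
)
post_lo, post_hi = relu_bounds(pre_lo, pre_hi)
```

Stacking such layers gives output bounds that hold for every input in the box and every weight in the weight box. If the bounds fall inside the safe set, all those weights are safe, which is one way such bounds could feed into a probability estimate.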

We develop the first principled framework for adversarial training of Bayesian neural networks (BNNs) with certifiable guarantees, enabling applications in safety-critical contexts. We rely on techniques from constraint relaxation of nonconvex optimisation problems and modify the standard cross-entropy error model to enforce posterior robustness to worst-case perturbations in ϵ-balls around input points.
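One common way to build a loss that is robust to worst-case perturbations is to evaluate cross-entropy on worst-case logits: the lower bound for the true class and the upper bounds for all other classes. The sketch below assumes that elementwise logit bounds over the ϵ-ball are already available (for example from bound propagation). The function names are hypothetical, and this shows the general idea rather than the exact error model used in this work.

```python
import math
from typing import List, Sequence


def worst_case_logits(lo: Sequence[float], hi: Sequence[float], label: int) -> List[float]:
    """Lower bound for the true class, upper bound for every other class."""
    return [lo[k] if k == label else hi[k] for k in range(len(lo))]


def cross_entropy(logits: Sequence[float], label: int) -> float:
    """Softmax cross-entropy, using the log-sum-exp trick for stability."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[label]


def robust_cross_entropy(lo: Sequence[float], hi: Sequence[float], label: int) -> float:
    """Upper bound on the cross-entropy over all logits within [lo, hi]."""
    return cross_entropy(worst_case_logits(lo, hi, label), label)


# Hypothetical bounds for a two-class problem with true class 0.
loss = robust_cross_entropy([1.0, -1.0], [3.0, 1.0], label=0)
```

Because cross-entropy decreases in the true-class logit and increases in every other logit, this value upper-bounds the loss at any point in the ϵ-ball whose logits respect the bounds.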

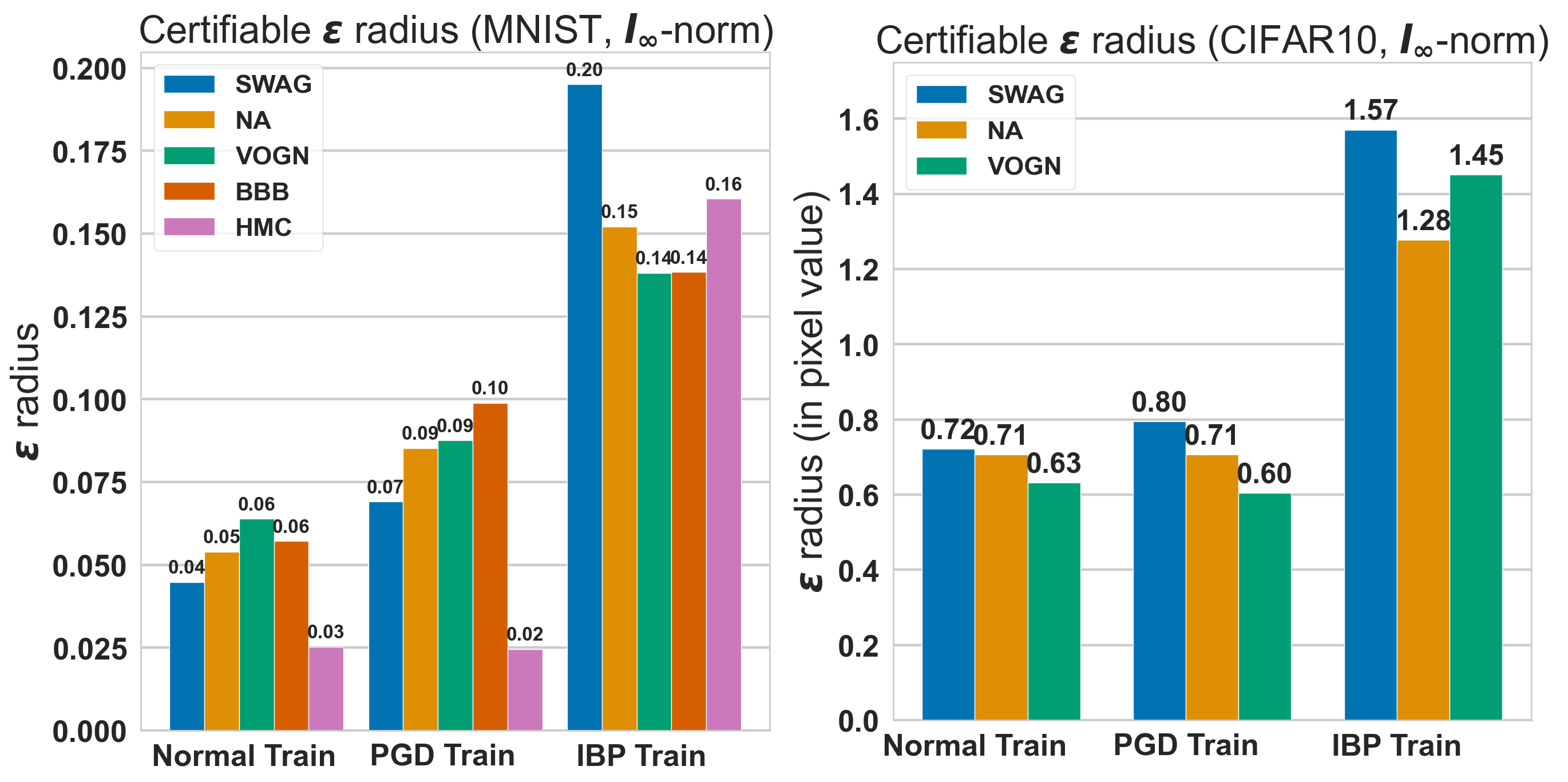

The plot shows the average certified radius for images from MNIST (right) and CIFAR-10 (left), computed using CNN-Cert. We observe that robust training with Interval Bound Propagation (IBP) roughly doubles the maximum verifiable radius compared with both standard training and training on PGD adversarial examples.
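A certified radius can be thought of as the largest perturbation size for which a verifier still succeeds. The sketch below finds it by bisection, assuming that certification is monotone in the radius (certified at ϵ implies certified at every smaller ϵ). The `is_certified` callable is a hypothetical stand-in for a verifier; this is not how CNN-Cert is invoked.

```python
from typing import Callable


def max_certified_radius(
    is_certified: Callable[[float], bool],
    eps_max: float,
    tol: float = 1e-4,
) -> float:
    """Largest radius in [0, eps_max] the verifier accepts, up to tol.

    Assumes is_certified is monotone: True up to some threshold, False beyond.
    """
    if is_certified(eps_max):
        return eps_max
    lo, hi = 0.0, eps_max  # invariant: lo is accepted (or 0), hi is rejected
    while hi - lo > tol:
        mid = (lo + hi) / 2.0
        if is_certified(mid):
            lo = mid
        else:
            hi = mid
    return lo


# Hypothetical verifier that certifies every radius up to 0.3.
radius = max_certified_radius(lambda eps: eps <= 0.3, eps_max=1.0)
```

Averaging this quantity over a set of test images would give a summary statistic of the kind plotted above.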

To know more about these models and analysis techniques, follow the links below.

Software: DeepBayes